- The Redis Array is the culmination of over a decade of iterative design and experimentation.

- Breakthroughs in open-source software often result from persistent refinement and a deep commitment to elegant design.

- Even minor inefficiencies in high-performance systems can compound under high load, affecting latency and scalability.

- The Redis Array addresses the growing complexity of Redis by introducing a simpler, more predictable data structure.

- The Redis Array’s development journey highlights the importance of patience and perseverance in achieving software innovation.

In a rare glimpse into the long gestation of software innovation, Salvatore Sanfilippo, the original creator of Redis, has unveiled the “Redis Array”, a new data structure that caps more than ten years of iterative design, experimentation, and philosophical reflection on software simplicity. Although Redis has been widely celebrated for its speed and versatility since its 2009 debut, the Array project reveals a lesser-known truth: some of the most impactful developments in open-source software take not months but a decade or more. Sanfilippo notes that the Array concept was first sketched in 2013, yet only now, after cycles of abandonment and revival, is it approaching maturity. This journey underscores a quiet reality in systems engineering: breakthroughs are often not sudden but the result of persistent refinement, failed prototypes, and a deep commitment to elegant design.

The Burden of Complexity in High-Performance Systems

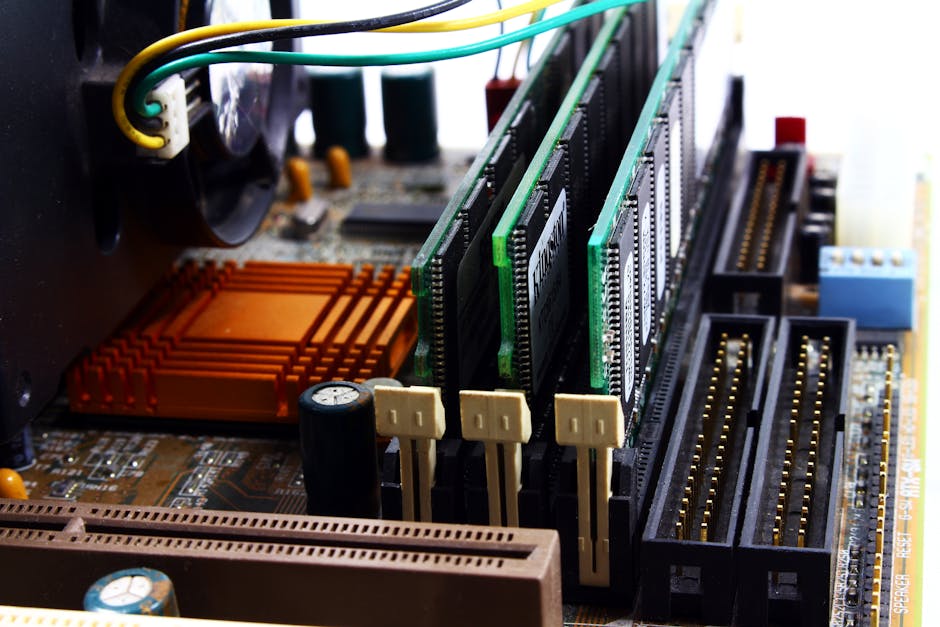

Redis, an in-memory data store known for its lightning-fast operations and support for rich data types like hashes, lists, and sets, has long been a staple in the infrastructure of major tech platforms including Twitter, GitHub, and Snapchat. Yet, as Redis evolved, its complexity grew—especially in how it managed dynamic data structures. Sanfilippo observed that even minor inefficiencies in memory layout or resizing logic could compound under high load, affecting latency and scalability. The need for a simpler, more predictable structure became urgent, particularly for use cases involving time-series data, buffers, or fixed-size collections. The Redis Array emerged from this tension: a bid to reconcile high performance with minimal cognitive and computational overhead. Unlike traditional Redis lists, which are implemented as linked structures, the Array is designed as a contiguous block of memory, enabling faster access and lower memory fragmentation—a decision grounded in decades of systems research.
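To make the layout difference concrete, here is a minimal C sketch that contrasts reaching an element in a linked layout with reaching it in a contiguous one. It is not taken from Redis: the `node` type and the `linked_get`, `contiguous_get`, `linked_build`, and `linked_free` names are hypothetical, and the singly linked list is a simplification rather than the encoding Redis lists actually use.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

/* Hypothetical linked layout: every element lives in its own heap node. */
typedef struct node {
    long value;
    struct node *next;
} node;

/* Reaching index i in a linked layout means following i pointers. */
long linked_get(const node *head, size_t index) {
    while (index-- > 0) {
        assert(head != NULL);
        head = head->next;
    }
    assert(head != NULL);
    return head->value;
}

/* In a contiguous layout the element sits at base + index: one address
 * computation, no pointer chasing. */
long contiguous_get(const long *base, size_t len, size_t index) {
    assert(index < len);
    return base[index];
}

/* Release every node of a linked list. */
void linked_free(node *head) {
    while (head != NULL) {
        node *next = head->next;
        free(head);
        head = next;
    }
}

/* Build a list holding 0, 10, 20, ... (n elements).
 * Returns NULL if n is 0 or an allocation fails. */
node *linked_build(size_t n) {
    node *head = NULL;
    for (size_t i = n; i > 0; i--) {
        node *nd = malloc(sizeof *nd);
        if (nd == NULL) {
            linked_free(head);
            return NULL;
        }
        nd->value = (long)(i - 1) * 10;
        nd->next = head;
        head = nd;
    }
    return head;
}
```

In the linked version, reading index `i` follows `i` pointers to nodes that may be scattered across the heap, while the contiguous version computes a single address. That is the property the paragraph above credits for faster access and lower fragmentation.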

From Prototype to Redis Core: A Decade of Iteration

The Redis Array’s development path was anything but linear. Sanfilippo first experimented with array-like structures in 2013 while optimizing Redis for real-time analytics workloads. Early versions were abandoned due to inflexible resizing behavior and incompatibility with Redis’s dynamic nature. Over the years, he revisited the idea during sabbaticals, open-source sprints, and personal projects, each time refining the resizing strategy—eventually settling on a hybrid approach that combines amortized doubling with copy-on-write semantics for safety. Key milestones included a 2018 prototype used in a real-time monitoring tool, and a 2021 rewrite that introduced bounds checking and overflow protection. The current implementation, written in pure C, is designed to coexist with existing Redis types and is undergoing integration testing for potential inclusion in a future Redis core release. The project also benefited from community feedback, particularly during discussions on the Redis GitHub repository and Hacker News threads that drew over 30 detailed technical comments.
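The article does not show the Array's code, so the following is only a sketch of the general techniques named above: capacity doubling on append, an overflow check before the size computation, and bounds-checked reads. All names (`grow_array`, `ga_init`, `ga_push`, `ga_get`, `ga_free`) and the starting capacity of 4 are assumptions made for illustration, and copy-on-write is left out for brevity.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Hypothetical growable array; the names are illustrative, not Redis's. */
typedef struct {
    long *items;  /* contiguous storage */
    size_t len;   /* elements in use */
    size_t cap;   /* elements allocated */
} grow_array;

void ga_init(grow_array *a) {
    assert(a != NULL);
    a->items = NULL;
    a->len = 0;
    a->cap = 0;
}

void ga_free(grow_array *a) {
    assert(a != NULL);
    free(a->items);
    ga_init(a);
}

/* Append one value, doubling capacity when the buffer is full.
 * Returns false on overflow or allocation failure and leaves the
 * array unchanged in that case. */
bool ga_push(grow_array *a, long value) {
    assert(a != NULL);
    if (a->len == a->cap) {
        size_t new_cap;
        if (a->cap == 0) {
            new_cap = 4; /* assumed starting capacity */
        } else {
            /* Overflow protection: refuse if the doubled byte count
             * would exceed SIZE_MAX. */
            if (a->cap > SIZE_MAX / 2 / sizeof *a->items) {
                return false;
            }
            new_cap = a->cap * 2;
        }
        long *grown = realloc(a->items, new_cap * sizeof *a->items);
        if (grown == NULL) {
            return false;
        }
        a->items = grown;
        a->cap = new_cap;
    }
    a->items[a->len++] = value;
    return true;
}

/* Bounds-checked read: stores the element in *out and returns true
 * only when index is valid. */
bool ga_get(const grow_array *a, size_t index, long *out) {
    assert(a != NULL && out != NULL);
    if (index >= a->len) {
        return false;
    }
    *out = a->items[index];
    return true;
}
```

Because capacity doubles each time the buffer fills, the total copying work over n appends stays proportional to n, which is what "amortized" refers to. A copy-on-write variant would additionally need some way to track whether a buffer is shared before writing to it; how the Array does that is not described in the source.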

Why Simplicity Is the Ultimate Optimization

At its core, the Redis Array embodies a growing movement in systems engineering that prioritizes simplicity as a performance and maintainability asset. Research from UC Berkeley and MIT has shown that contiguous memory layouts can reduce CPU cache misses by up to 40% compared to pointer-based structures, directly translating to lower latency. Sanfilippo’s benchmarks indicate that the Array can perform sequential reads up to 3x faster than traditional Redis lists under heavy load. But the benefits extend beyond speed: simpler structures are easier to debug, audit, and secure. As Sanfilippo notes in his blog post on antirez.com, “Every pointer is a potential failure point.” This philosophy echoes broader industry trends, such as the rise of Zig and Rust for systems programming, where safety and predictability are first-class concerns. The Redis Array, therefore, is not just a technical enhancement but a statement on software values.

Implications for Developers and Distributed Systems

The Redis Array could significantly impact developers building high-throughput applications, especially in domains like financial trading, IoT telemetry, and real-time gaming, where predictable latency is critical. By reducing memory fragmentation and improving cache locality, the Array may allow Redis to handle larger workloads on the same hardware, lowering operational costs. Moreover, its design could influence other in-memory databases and data structure libraries. For instance, in-memory platforms such as Apache Ignite, or managed services such as Amazon ElastiCache, might adopt similar principles to optimize performance. However, adoption will depend on backward compatibility and the stability of the implementation. While the Array is not intended to replace all list operations, it offers a compelling alternative for use cases where order and performance are paramount.

Expert Perspectives

Industry experts have welcomed the Redis Array as a thoughtful evolution. According to Dr. Jane Levy, a systems researcher at MIT, “Sanfilippo’s work reaffirms that innovation in infrastructure software is often incremental and deeply informed by real-world usage.” However, some developers caution against overgeneralization. A lead engineer at a major cloud provider, speaking anonymously, noted, “Arrays are fast, but they’re not always flexible. You trade dynamic resizing for performance—sometimes that’s worth it, sometimes it’s not.” These contrasting views reflect a fundamental trade-off in systems design: raw efficiency versus adaptability.

Looking ahead, the Redis Array’s journey is far from over. The next phase involves stress testing in production environments, performance tuning, and potential integration into the official Redis distribution. Open questions remain: How will it interact with Redis modules? Can it support atomic operations across shards? As the database landscape evolves with AI-driven workloads and edge computing, the Redis Array stands as a testament to the enduring power of patience, iteration, and the pursuit of simplicity in a world enamored with complexity.

Source: Antirez