- Most Claude implementations leave 90% of their potential cost savings on the table because they are not optimized for Prompt Caching.

- Prompt Caching lets a model reuse the work it has already done on a repeated prompt prefix instead of reprocessing it, cutting redundant computation and latency.

- Optimizing for Prompt Caching can significantly improve AI system performance and efficiency, resulting in cost savings.

- Most AI implementations fail to capitalize on the benefits of Prompt Caching, leading to significant waste of resources.

- Efficient Prompt Caching solutions are crucial for companies and individuals relying on AI technologies to minimize financial losses.

Most Claude implementations leave a staggering 90% of their potential cost savings on the table because they are not optimized for Prompt Caching. For the companies and individuals who rely on these systems, that oversight translates into substantial, avoidable spending. With AI adoption accelerating, the need for efficient and cost-effective solutions has never been more pressing. The ‘Goldfish Problem’ is a stark reminder of why optimizing AI implementations matters.

The Importance of Prompt Caching

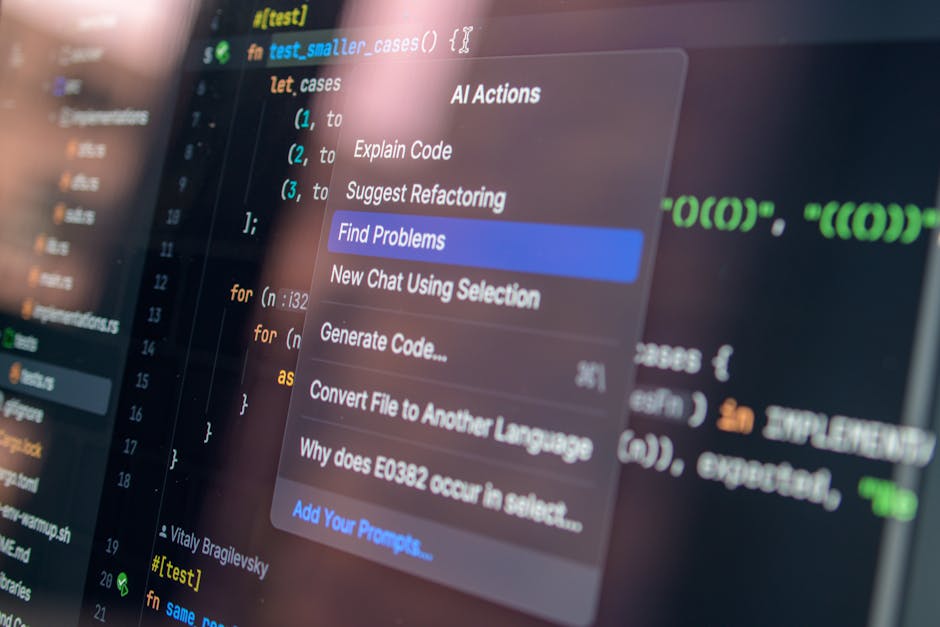

Prompt Caching is a crucial aspect of AI optimization. When a request begins with a prompt prefix the system has already processed, the stored result of that processing is reused instead of being recomputed, which eliminates redundant work and reduces latency. Optimizing for Prompt Caching can therefore significantly improve the performance and efficiency of an AI implementation and lead to substantial cost savings. Yet this aspect of AI development receives little attention, and most implementations fail to capitalize on it, wasting significant resources. As demand for AI technologies grows, efficient and cost-effective solutions will only become more important.
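To make the prefix-reuse idea concrete, here is a minimal toy model. It is an illustration under simplifying assumptions, not how any provider implements caching: real systems store internal model state for a prefix, whereas this sketch only remembers which prefixes it has seen and counts the characters a request would have to process from scratch. The class `ToyPrefixCache` is hypothetical.

```python
import hashlib
from typing import Set, Tuple


class ToyPrefixCache:
    """Toy model of prefix-based prompt caching (illustrative only)."""

    def __init__(self) -> None:
        self._seen: Set[str] = set()  # hashes of prefixes already "processed"

    @staticmethod
    def _key(prefix: str) -> str:
        return hashlib.sha256(prefix.encode("utf-8")).hexdigest()

    def process(self, prefix: str, suffix: str) -> Tuple[bool, int]:
        """Return (cache_hit, characters that must be processed from scratch)."""
        key = self._key(prefix)
        if key in self._seen:
            # Hit: the stored prefix work is reused; only the new suffix is processed.
            return True, len(suffix)
        # Miss: the whole prompt is processed, and the prefix is remembered.
        self._seen.add(key)
        return False, len(prefix) + len(suffix)


if __name__ == "__main__":
    cache = ToyPrefixCache()
    system = "You are a coding agent. " * 100  # long, stable prefix (2400 chars)
    print(cache.process(system, "Fix the failing test."))  # (False, 2421)
    print(cache.process(system, "Now add a docstring."))   # (True, 20)
    # Prepending a volatile value changes the prefix, so the cache misses again.
    print(cache.process("12:00 " + system, "Run the tests."))  # (False, 2420)
```

Because the lookup is keyed on the exact prefix, changing even a few characters near the start of the prompt (a timestamp, for example) turns every request into a miss.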

Introducing Galadriel: A ‘Warm-Cache’ Harness for Persistent Claude Agents

Galadriel, a ‘Warm-Cache’ harness designed for persistent Claude agents, is a recent advance in AI optimization. It has been open-sourced, so developers can apply its approach to their own implementations. Tested against other prominent AI tools such as OpenClaw and Cursor, Galadriel has shown significant reductions in both cost and latency, demonstrating that Prompt Caching can deliver substantial savings without sacrificing performance.
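The ‘Warm-Cache’ name suggests keeping cached prefixes alive between an agent’s requests. Galadriel’s internals are not described here, so the following is only a hypothetical sketch of one way a harness could decide when to do this. It assumes Anthropic’s default cache lifetime of five minutes, which is refreshed each time the cached prefix is reused; `WarmCacheTimer` and its safety margin are illustrative names, not part of Galadriel or any SDK.

```python
from dataclasses import dataclass
from typing import Optional

# Assumption: cached prefixes live for 5 minutes and the timer resets on each hit.
CACHE_TTL_S = 300.0


@dataclass
class WarmCacheTimer:
    """Hypothetical keep-alive timer for a cached prompt prefix (not Galadriel's code)."""

    margin_s: float = 30.0              # how long before expiry to send a keep-alive
    last_hit_s: Optional[float] = None  # time of the last request that used the cache

    def record_hit(self, now_s: float) -> None:
        """Call whenever a request reuses (or writes) the cached prefix."""
        self.last_hit_s = now_s

    def expires_at(self) -> Optional[float]:
        """Expiry time of the cached prefix, or None if nothing is cached yet."""
        if self.last_hit_s is None:
            return None
        return self.last_hit_s + CACHE_TTL_S

    def should_ping(self, now_s: float) -> bool:
        """True when the cache is still warm but within margin_s of expiring."""
        exp = self.expires_at()
        if exp is None:
            return False
        return exp - self.margin_s <= now_s < exp


if __name__ == "__main__":
    timer = WarmCacheTimer()
    timer.record_hit(0.0)
    print(timer.should_ping(100.0))  # False: plenty of lifetime left
    print(timer.should_ping(280.0))  # True: within 30 s of expiry at 300 s
    print(timer.should_ping(310.0))  # False: already expired, next request rewrites the cache
```

The design trade-off: under Anthropic’s pricing, a keep-alive request reads the cached prefix at about 10% of the base input-token price, while letting the cache expire means the next request writes it again at 125%. Pinging pays off only when the agent is likely to resume soon.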

Key Statistics and Findings

Galadriel’s reported statistics are impressive, showing notable reductions in both cost and latency. It cuts expenses by 87%: for every $100 spent on a traditional implementation, a Galadriel-based one spends roughly $13. Its latency is also remarkably low, with response times under 3 seconds. For companies and individuals relying on AI, these findings show that substantial cost savings and better performance are achievable. By adopting Galadriel and optimizing for Prompt Caching, developers can recover much of the savings their implementations currently leave on the table.
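The arithmetic behind savings like these can be sketched with a simple input-cost model. It assumes Anthropic’s published multipliers for five-minute caching (cache writes at 1.25x and cache reads at 0.1x the base input-token price), prices everything in units of the base rate, and uses hypothetical token counts; it does not reproduce Galadriel’s measurements. The function `input_cost` is an illustrative name.

```python
def input_cost(prefix_tokens: int, suffix_tokens: int, n_requests: int,
               cached: bool, write_mult: float = 1.25, read_mult: float = 0.10) -> float:
    """Total input cost in units of the base per-token price.

    prefix_tokens: stable tokens shared by every request (cacheable).
    suffix_tokens: new, uncached tokens added per request.
    """
    if n_requests < 1:
        return 0.0
    if not cached:
        return float(n_requests * (prefix_tokens + suffix_tokens))
    first = prefix_tokens * write_mult + suffix_tokens  # first request writes the cache
    rest = (n_requests - 1) * (prefix_tokens * read_mult + suffix_tokens)  # later requests read it
    return first + rest


if __name__ == "__main__":
    # Hypothetical agent: 50k-token stable prefix, 1k new tokens per turn, 20 turns.
    without = input_cost(50_000, 1_000, 20, cached=False)
    with_cache = input_cost(50_000, 1_000, 20, cached=True)
    print(without, with_cache)                        # 1020000.0 177500.0
    print(f"saving: {1 - with_cache / without:.1%}")  # saving: 82.6%
```

As the stable prefix grows relative to the per-turn suffix and the number of turns rises, the saving under this model approaches, but never exceeds, the 90% discount on cache reads.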

Expert Analysis and Insights

Galadriel’s success comes down to how well it optimizes for Prompt Caching. By reusing cached prompt prefixes rather than reprocessing them on every request, it avoids redundant computation, which lowers both latency and cost. Expert opinion suggests that Galadriel is a major breakthrough in AI optimization, with the potential to transform the industry. As demand for efficient, cost-effective AI grows, the importance of optimizing for Prompt Caching will become increasingly apparent, and Galadriel is poised to lead that shift.
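In practice, optimizing for Prompt Caching largely means ordering a request so that stable content comes first and marking where the reusable prefix ends. The sketch below builds a payload in the shape used by Anthropic’s Messages API, where a `cache_control` field of type `ephemeral` on a content block sets a cache breakpoint; it only assembles dictionaries and makes no network call. The helper names `build_request` and `prefix_fingerprint` are hypothetical, and `MODEL_ID` is a placeholder rather than a real model name.

```python
import hashlib
import json
from typing import Any, Dict, List


def build_request(model: str, system_prompt: str, reference_docs: str,
                  history: List[Dict[str, Any]], max_tokens: int = 1024) -> Dict[str, Any]:
    """Assemble a Messages API-style payload with stable content first.

    The system blocks (ending at the cache_control breakpoint) form the
    cacheable prefix; the growing conversation history comes after it.
    """
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": [
            {"type": "text", "text": system_prompt},
            {
                "type": "text",
                "text": reference_docs,
                "cache_control": {"type": "ephemeral"},  # cache breakpoint
            },
        ],
        "messages": history,
    }


def prefix_fingerprint(payload: Dict[str, Any]) -> str:
    """Hash the system blocks so any drift in the cached prefix is easy to detect."""
    blob = json.dumps(payload["system"], sort_keys=True).encode("utf-8")
    return hashlib.sha256(blob).hexdigest()


if __name__ == "__main__":
    docs = "Tool reference text. " * 50  # stands in for a large, stable document
    turn1 = build_request("MODEL_ID", "You are a persistent agent.", docs,
                          [{"role": "user", "content": "Start the task."}])
    turn2 = build_request("MODEL_ID", "You are a persistent agent.", docs,
                          [{"role": "user", "content": "Start the task."},
                           {"role": "assistant", "content": "Started."},
                           {"role": "user", "content": "Continue."}])
    # Same prefix across turns, so the second request can hit the cache.
    print(prefix_fingerprint(turn1) == prefix_fingerprint(turn2))  # True
```

With the official `anthropic` Python SDK, a payload like this could be passed as `client.messages.create(**payload)`. The fingerprint check is a cheap guard: if it changes between turns, something volatile has crept into the prefix and the cache will miss.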

Implications and Future Developments

Galadriel’s success has far-reaching implications for companies and individuals who rely on AI. By adopting it, developers can get substantially more out of their existing implementations, cutting costs while improving performance. As the industry evolves and efficiency becomes a priority, Galadriel is well positioned to play a leading role, and its impact will be closely watched in the coming months and years.

Expert Perspectives

Experts in AI optimization have offered contrasting views on Galadriel’s potential impact. Some hail it as a major breakthrough that could transform the industry; others urge caution, citing the need for further testing and development. Despite these differences, there is broad agreement that Galadriel is an important step forward in AI optimization.

As the industry evolves, it will be important to track how Galadriel develops and how it shapes the field of AI optimization. One open question remains: how will the wider industry respond to the challenge of optimizing for Prompt Caching, and what developments will follow? Only time will tell, but one thing is certain: the future of AI optimization looks brighter than ever, and Galadriel is leading the charge.