- Researchers have developed new visualization techniques to reveal the hidden geometry of neural network training.

- The paths networks take during training are smoother and more structured than previously thought.

- Crude 2D slices of the loss landscape often misled researchers about minima and saddle points.

- Understanding the loss landscape is crucial for designing, training, and interpreting artificial intelligence systems.

- New insights could reshape how we approach optimization in deep learning.

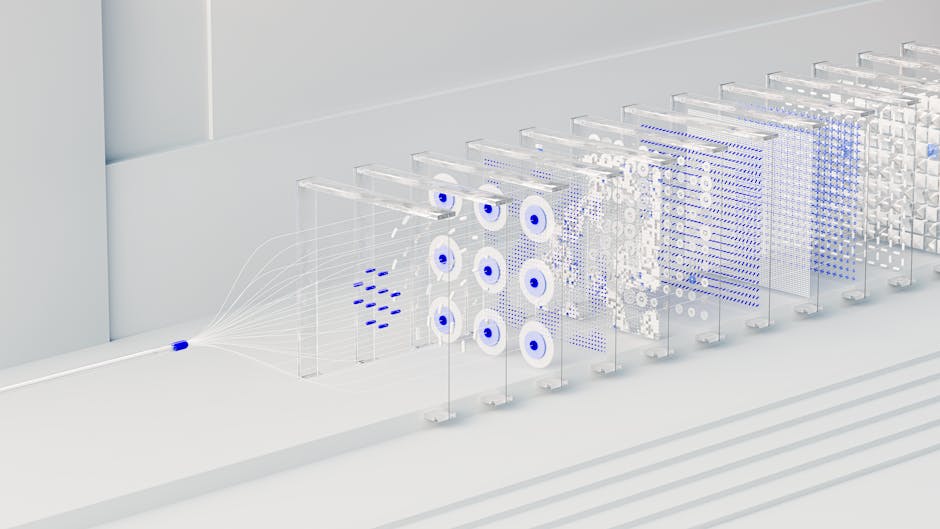

Neural networks with millions or even billions of parameters are trained by navigating a hidden terrain known as the loss landscape: a high-dimensional surface where each point is one setting of the model's parameters and its height is the loss at that setting. Understanding this geometry is crucial, yet human intuition fails in spaces with millions of dimensions. For years, researchers relied on crude 2D slices that often misrepresented the true nature of minima and saddle points. Now, new visualization techniques suggest that the paths networks take during training are far smoother and more structured than previously thought, challenging long-held assumptions about optimization in deep learning. These insights could reshape how we design, train, and interpret artificial intelligence systems.

The Challenge of High-Dimensional Visualization

Neural networks minimize a loss function across millions of parameters, forming a complex, non-convex landscape filled with local minima, plateaus, and ridges. Traditional 2D contour plots, created by evaluating the loss on a plane spanned by two random directions through a trained model's parameters, often suggest sharp, isolated minima, implying fragile solutions. However, such visualizations can distort the true geometry because the choice of directions is arbitrary. The human brain struggles to extrapolate from these simplified views, leading to misconceptions about why some models generalize well while others overfit. With the rise of large language models and vision transformers, understanding this landscape is no longer just academic; it is essential for improving robustness, convergence speed, and model efficiency in production AI systems.
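To make the random-slice idea concrete, here is a minimal sketch of how such a 2D plot is typically built. Everything in it is illustrative: `toy_loss` is a hypothetical stand-in for a real network's loss, and the function names, grid size, and radius are assumptions rather than anything taken from a specific paper or library.

```python
import math
import random

def toy_loss(theta):
    """Hypothetical non-convex loss standing in for a real network's loss."""
    return sum(t * t + 0.5 * math.sin(3.0 * t) ** 2 for t in theta) / len(theta)

def random_direction(dim, rng):
    """Gaussian random direction scaled to unit Euclidean length."""
    d = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    length = math.sqrt(sum(x * x for x in d))
    return [x / length for x in d]

def loss_slice(loss_fn, theta, d1, d2, radius=1.0, steps=11):
    """Evaluate loss_fn on a steps x steps grid of points theta + a*d1 + b*d2."""
    coords = [-radius + 2.0 * radius * i / (steps - 1) for i in range(steps)]
    grid = [
        [loss_fn([t + a * u + b * v for t, u, v in zip(theta, d1, d2)])
         for b in coords]
        for a in coords
    ]
    return coords, grid

rng = random.Random(0)
theta_star = [0.0] * 50            # the toy loss is minimized at the origin
d1 = random_direction(50, rng)
d2 = random_direction(50, rng)
coords, grid = loss_slice(toy_loss, theta_star, d1, d2)
```

The resulting `grid` can be handed to any contour-plotting routine. Re-running with a different seed changes `d1` and `d2`, and with them the picture, which is exactly the arbitrariness the paragraph above describes.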

A New Lens on Loss Surface Geometry

In a landmark study, "Visualizing the Loss Landscape of Neural Nets," Li et al. introduced filter normalization and used principal component analysis (PCA)-based projections to create more faithful visualizations. Filter normalization rescales each random direction so that its filters match the norms of the network's own filters, removing scale artifacts that can make a minimum look sharper or flatter than it is. PCA-based projection aligns the plot axes with the directions along which the parameters moved most during training. Together, these techniques indicate that many "sharp" minima are actually broad when viewed in the right coordinate system. When the loss is visualized along the first two principal components of the optimization trajectory, the path appears remarkably smooth and lies in wide valleys. Related results on mode connectivity suggest that different training runs often converge to regions that are not just functionally similar but connected by low-loss paths. The study, published at NeurIPS 2018, laid the foundation for modern loss landscape analysis.
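The two ideas named above can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the authors' implementation: filter normalization is shown as it is commonly described (each filter of a random direction is rescaled to the norm of the matching filter in the trained weights), and the PCA step uses power iteration on uncentred differences from the final checkpoint. The function names, the toy `weights`, and the synthetic `checkpoints` trajectory are all hypothetical.

```python
import math
import random

def norm(v):
    return math.sqrt(sum(x * x for x in v))

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def filter_normalize(direction, weights, eps=1e-10):
    """Rescale each filter in `direction` to the norm of the matching filter
    in `weights`. Both are lists of filters; a filter is a flat list of floats."""
    normalized = []
    for d_filter, w_filter in zip(direction, weights):
        scale = norm(w_filter) / (norm(d_filter) + eps)
        normalized.append([x * scale for x in d_filter])
    return normalized

def top_components(rows, k=2, iters=200, seed=0):
    """Top-k principal directions of `rows` by power iteration with deflation.
    Rows are used as given (no mean-centring), an assumption of this sketch."""
    rng = random.Random(seed)
    rows = [list(r) for r in rows]
    dim = len(rows[0])
    components = []
    for _ in range(k):
        v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        for _ in range(iters):
            scores = [dot(r, v) for r in rows]                      # M v
            v = [sum(s * r[j] for s, r in zip(scores, rows))        # M^T (M v)
                 for j in range(dim)]
            length = norm(v)
            v = [x / length for x in v]
        components.append(v)
        # Deflate: remove the component just found from every row.
        deflated = []
        for r in rows:
            s = dot(r, v)
            deflated.append([x - s * c for x, c in zip(r, v)])
        rows = deflated
    return components

# Filter normalization on a toy "network" with two filters.
rng = random.Random(1)
weights = [[3.0, 4.0], [0.5, 0.0, 0.0]]
raw = [[rng.gauss(0.0, 1.0) for _ in f] for f in weights]
direction = filter_normalize(raw, weights)

# Synthetic training trajectory that moves mainly along the first two axes.
checkpoints = [[4.0 * 0.5 ** t, 2.0 * 0.8 ** t, 0.01 * (-1) ** t, 0.0, 0.0, 0.0]
               for t in range(10)]
final = checkpoints[-1]
diffs = [[a - b for a, b in zip(c, final)] for c in checkpoints[:-1]]
pc1, pc2 = top_components(diffs)
path_2d = [(dot(d, pc1), dot(d, pc2)) for d in diffs]  # trajectory in PCA plane
```

In practice the loss would then be evaluated on a grid spanned by `pc1` and `pc2` around the final checkpoint, with `path_2d` drawn on top. A real implementation would more likely use a linear algebra library's SVD than hand-written power iteration; the latter is used here only to keep the sketch dependency-free.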

Implications for Optimization and Generalization

The shape of a minimum, whether sharp or flat, has long been linked to generalization. Flat minima, where small parameter changes don't drastically increase loss, tend to correlate with better performance on unseen data. The new visualization tools are consistent with the view that optimization algorithms like stochastic gradient descent (SGD) naturally favor these flat regions, partly due to the noise inherent in mini-batch gradients. Moreover, techniques like learning rate scheduling and batch normalization appear to modify the effective landscape, guiding the optimizer toward broader basins. Recent work published in Nature reports that flatness correlates with model robustness, especially in adversarial settings. These findings suggest that loss landscape analysis could become a diagnostic tool for model reliability, much like stress testing in finance.
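One simple way to put a number on "sharp versus flat" is to measure how much the loss rises, on average, when the parameters are nudged a fixed distance in random directions. The sketch below does that for two hypothetical quadratic basins. The `sharpness` function, its radius, and both toy losses are assumptions chosen for illustration, and this is only one of several flatness measures in use.

```python
import math
import random

def sharpness(loss_fn, theta, radius=0.1, samples=200, seed=0):
    """Mean loss increase over random perturbations of Euclidean length `radius`."""
    rng = random.Random(seed)
    base = loss_fn(theta)
    total = 0.0
    for _ in range(samples):
        d = [rng.gauss(0.0, 1.0) for _ in theta]
        scale = radius / math.sqrt(sum(x * x for x in d))
        total += loss_fn([t + scale * x for t, x in zip(theta, d)]) - base
    return total / samples

def flat_loss(theta):
    """Hypothetical wide basin: low curvature in every direction."""
    return 0.5 * sum(t * t for t in theta)

def sharp_loss(theta):
    """Hypothetical narrow basin: 100x the curvature of flat_loss."""
    return 50.0 * sum(t * t for t in theta)

theta_min = [0.0] * 20               # both toy losses are minimized here
flat_score = sharpness(flat_loss, theta_min)
sharp_score = sharpness(sharp_loss, theta_min)
```

Here `sharp_score` comes out 100 times larger than `flat_score`, matching the curvature ratio. Note that a raw score like this depends on how the parameters are scaled, so comparing scores across different architectures requires some form of normalization.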

Practical Impact Across AI Development

These insights are already influencing AI engineering practices. Model developers now use loss landscape visualizations to compare architectures, debug training instability, and assess the impact of regularization techniques. For example, when fine-tuning large language models, practitioners can visualize how pretraining shapes the loss surface, making downstream tasks more navigable. Additionally, companies like OpenAI and DeepMind are reportedly using such analyses to evaluate checkpoint stability and ensemble diversity. In education, these visualizations serve as powerful teaching tools, helping students grasp abstract concepts like convergence and overfitting. As AI models grow more complex, the ability to “see” the training process becomes a critical part of the development pipeline.

Expert Perspectives

While many researchers celebrate these advances, some urge caution. Yoshua Bengio has noted that 2D projections, even when optimized, remain incomplete representations. “We’re seeing shadows of a higher-dimensional reality,” he warned in a 2022 keynote. Others, like Surya Ganguli of Stanford, argue that dynamic landscapes—where the loss surface changes with data or architecture—require time-evolving visualizations. Meanwhile, skeptics question whether flatness alone determines generalization, pointing to recent counterexamples where sharp minima generalize well. The debate underscores that while visualization is powerful, it must be paired with rigorous theoretical analysis.

Looking ahead, the next frontier includes 3D interactive visualizations, real-time monitoring during training, and integration with automated hyperparameter tuning. Researchers are also exploring how loss landscapes evolve across modalities—such as vision, language, and robotics—seeking universal principles of learning. As AI systems become more autonomous, understanding their optimization paths may be key to ensuring they learn safely and predictably. The ability to map and interpret these invisible terrains could ultimately lead to more transparent, trustworthy artificial intelligence.

Source: Reddit