- Arc Gate, an LLM proxy, scored perfect precision, recall, and F1 (1.00) on a 40-prompt moderation benchmark, surpassing established tools such as OpenAI Moderation API and LlamaGuard.

- The result could have far-reaching implications, with potential applications in content moderation, cybersecurity, and beyond.

- The emergence of Arc Gate addresses the growing need for effective content moderation in the rapidly evolving AI landscape.

- Arc Gate detects and blocks harmful or inappropriate content in online interactions, with an average detection time of 329 ms in testing.

- Its perfect score on 40 out-of-distribution prompts, including indirect requests and roleplay framings, suggests it can handle complex and varied phrasings of harmful requests.

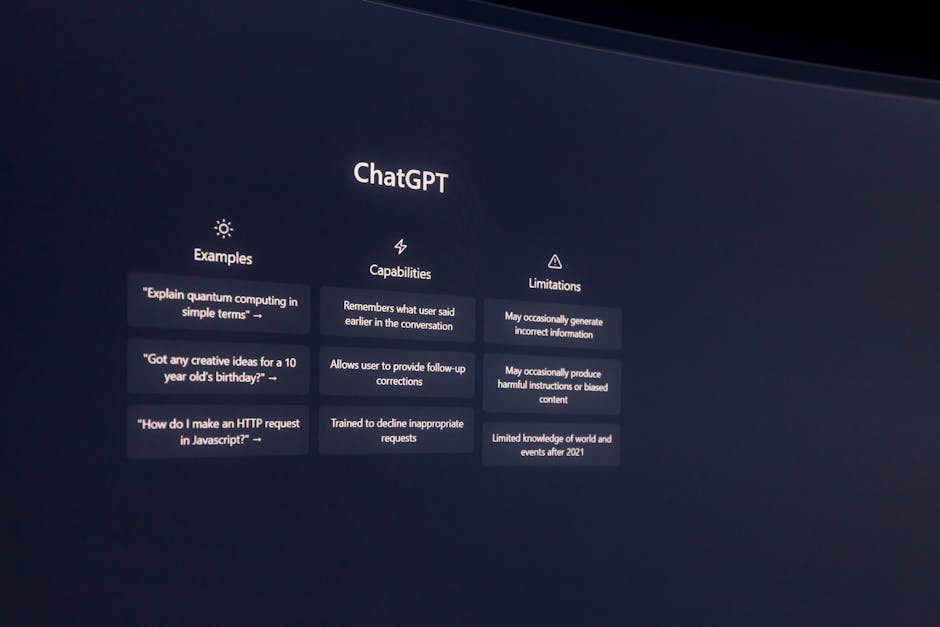

Arc Gate, a new LLM proxy, has achieved a perfect score on a benchmark of 40 out-of-distribution prompts: precision (P) = 1.00, recall (R) = 1.00, and F1 = 1.00. This result surpasses established moderation tools, including OpenAI Moderation API and LlamaGuard. It points to potential applications in content moderation, cybersecurity, and beyond.

The Evolution of AI Moderation

Arc Gate arrives at a time when AI models are growing more capable and more widely deployed, which has sharply increased the need for effective content moderation. Detecting and blocking harmful or inappropriate content is crucial for keeping online spaces safe and respectful. However, existing solutions have often struggled to keep pace with the volume and complexity of online interactions. Arc Gate aims to close that gap, offering a tool for protecting users and maintaining the integrity of online platforms.

Technical Details and Benchmark Performance

The benchmark exposed Arc Gate to a diverse set of prompts, including indirect requests, roleplay framings, hypothetical scenarios, and technical phrasings. Arc Gate scored P=1.00, R=1.00, F1=1.00, ahead of OpenAI Moderation API (P=1.00, R=0.75, F1=0.86) and LlamaGuard 3 8B (P=1.00, R=0.55, F1=0.71). All three systems had perfect precision, so none of them flagged a benign prompt; the difference lies in recall. OpenAI Moderation API missed a quarter of the harmful prompts and LlamaGuard 3 8B missed 45%, while Arc Gate had zero false positives and zero misses, with an average detection time of 329 ms. On this test set, Arc Gate provided more complete coverage of harmful content than either baseline.
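P, R, and F1 here are the standard classification metrics: precision is the share of flagged prompts that were actually harmful, recall is the share of harmful prompts that were flagged, and F1 is their harmonic mean. The Python sketch below assumes those usual definitions, since the source does not publish its scoring script. It shows why zero false positives and zero misses force all three metrics to 1.00, and it recomputes each system's F1 from its reported P and R. The true-positive count used for Arc Gate (`tp=20`) is a hypothetical placeholder, because the number of harmful prompts among the 40 is not reported.

```python
def precision(tp: int, fp: int) -> float:
    """Share of flagged prompts that were actually harmful."""
    return tp / (tp + fp) if tp + fp else 0.0


def recall(tp: int, fn: int) -> float:
    """Share of harmful prompts that were flagged."""
    return tp / (tp + fn) if tp + fn else 0.0


def f1(p: float, r: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * p * r / (p + r) if p + r else 0.0


# Zero false positives (fp=0) and zero misses (fn=0) give P = R = 1.0
# for any positive true-positive count. tp=20 is a HYPOTHETICAL placeholder.
arc_p = precision(tp=20, fp=0)
arc_r = recall(tp=20, fn=0)

# Reported (P, R) pairs from the benchmark; F1 is recomputed from them.
REPORTED = {
    "Arc Gate": (arc_p, arc_r),
    "OpenAI Moderation API": (1.00, 0.75),
    "LlamaGuard 3 8B": (1.00, 0.55),
}

for name, (p, r) in REPORTED.items():
    # Prints 1.00, 0.86 and 0.71, matching the reported F1 scores.
    print(f"{name}: F1 = {f1(p, r):.2f}, missed = {1 - r:.0%}")
```

Because every system reports P=1.00, F1 reduces to 2R/(1+R) here, so the F1 gap between the three comes entirely from recall.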

Causes and Effects: Understanding the Broader Impact

Arc Gate's performance is attributed to its architecture and training data. By combining natural language processing with machine learning, the model is reported to pick up subtle patterns and nuances in language that other systems miss, such as harmful requests disguised as roleplay or hypotheticals. The effects could reach a wide range of fields, from social media and online forums to education and cybersecurity. As the use of AI expands, the need for effective content moderation will only grow, which makes Arc Gate's arrival timely and significant.

Implications and Future Directions

Arc Gate's result could have profound consequences for individuals, organizations, and society as a whole. A robust, efficient way to detect and block harmful content can help create a safer and more respectful online environment, which in turn may benefit mental health, social cohesion, and civic discourse. As the technology evolves, its adoption will also need scrutiny for risks and challenges such as bias, transparency, and accountability.

Expert Perspectives

Experts in the field are weighing in on the significance of Arc Gate’s achievement, with some hailing it as a major breakthrough and others urging caution. According to Dr. Rachel Kim, a leading researcher in AI ethics, “Arc Gate’s performance is a remarkable achievement, but it also raises important questions about the potential risks and unintended consequences of relying on AI-powered content moderation.” Meanwhile, industry analyst Michael Chen notes, “The development of Arc Gate has the potential to disrupt the content moderation landscape, offering a powerful new tool for protecting users and maintaining the integrity of online platforms.”

As the AI landscape evolves, it will be important to monitor how Arc Gate and other emerging technologies in the field are developed and deployed. A key open question is how these advances will be integrated into existing systems and workflows, and what challenges or opportunities that integration will create. Will Arc Gate's result pave the way for a new era of AI-powered content moderation, or will it face significant hurdles on the path to widespread adoption? Only time will tell, but the future of AI moderation looks both promising and uncertain.