- Evaluating AI models for accuracy can incentivize them to generate false information.

- The pursuit of accuracy in large language models can paradoxically increase hallucinations.

- Current methods for evaluating AI performance may inadvertently foster hallucinations.

- Large language models used in various applications may be providing unreliable information due to hallucinations.

- A more nuanced approach to evaluating AI performance is needed to prevent hallucinations.

Evaluating large language models for accuracy can incentivize those models to hallucinate facts. Hallucination, in which a model generates false information and presents it as fact, has significant implications for how AI systems are developed and deployed. According to a recent study published in Nature, optimizing large language models for accuracy can paradoxically increase hallucinations, which points to the need for a more nuanced approach to evaluating AI performance.

The Accuracy Paradox

The findings matter because large language models are increasingly used in applications ranging from virtual assistants to language translation software. As these models become more pervasive, they need to provide accurate and reliable information. However, the research suggests that current evaluation methods may inadvertently foster hallucinations. This raises the question of whether the metrics used to measure AI performance are adequate for assessing the capabilities of large language models.
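One way to see how an accuracy-only metric could reward guessing is to compare expected scores for answering versus abstaining. The sketch below is an illustration under an assumed grading rule (1 point for a correct answer, 0 for a wrong answer, 0 for "I don't know"). The rule and the function name `expected_accuracy_score` are hypothetical and are not taken from the study.

```python
def expected_accuracy_score(p_correct: float, abstain: bool) -> float:
    """Expected score on one question under accuracy-only grading.

    Assumed grading rule (hypothetical, not from the study):
    1 point for a correct answer, 0 points for a wrong answer,
    and 0 points for abstaining ("I don't know").
    """
    if not 0.0 <= p_correct <= 1.0:
        raise ValueError("p_correct must be between 0 and 1")
    # Abstaining always earns 0; guessing earns 1 with probability p_correct.
    return 0.0 if abstain else p_correct


# Compare guessing and abstaining at a few confidence levels.
for p in (0.05, 0.25, 0.50):
    guess = expected_accuracy_score(p, abstain=False)
    skip = expected_accuracy_score(p, abstain=True)
    print(f"p_correct={p:.2f}  guess={guess:.2f}  abstain={skip:.2f}")
```

Under this assumed rule, a guess never scores worse than an abstention, however unlikely it is to be right, so a model tuned only to maximize the score has no reason to admit uncertainty.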

Key Findings

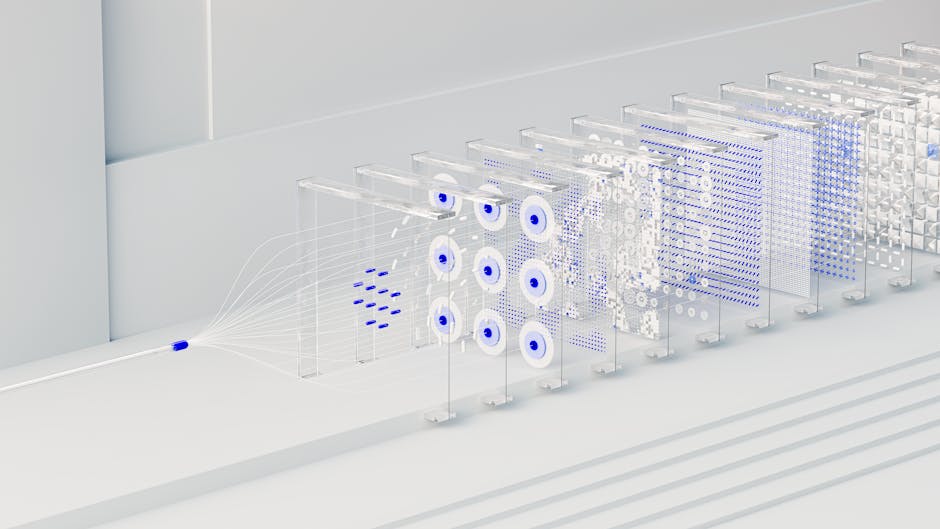

The researchers analyzed a range of large language models, including those used in popular AI applications, and found that the models’ tendency to hallucinate increased as they were optimized for accuracy. Across a variety of prompts, the models often generated detailed and convincing but entirely fictional information. The study’s authors attribute this to the models’ ability to recognize and mimic patterns in language, which can lead them to produce plausible-sounding but false statements. The findings highlight the need for evaluation methods that can detect and mitigate hallucinations.

Causes and Effects

The causes of hallucinations in large language models involve both the architecture of the models and the data used to train them. According to experts, models often hallucinate when they attempt to fill gaps in their knowledge or to generate responses consistent with their training data. The effects range from the spread of misinformation to the erosion of trust in AI systems. The study’s findings highlight the need to better understand how AI models, their training data, and evaluation metrics interact.

Implications and Consequences

The findings have consequences for the many industries and applications that rely on large language models. From customer service chatbots to language translation software, the potential for hallucinations to give users inaccurate information is a major concern. As AI systems become more integrated into daily life, it is essential to develop more effective methods for evaluating and mitigating hallucinations. This will require a concerted effort from researchers, developers, and regulators to create a more robust and transparent AI ecosystem.

Expert Perspectives

AI researchers are divided on how best to address hallucinations in large language models. Some argue that the solution lies in developing more sophisticated evaluation metrics that can detect and penalize hallucinations, while others suggest that the problem is more fundamental and requires rethinking the underlying architecture of the models. According to Dr. Maria Rodriguez, a leading researcher in the field, “The issue of hallucinations is a wake-up call for the AI community, highlighting the need for more rigorous testing and evaluation of our models. We need to develop more effective methods for detecting and mitigating hallucinations, and to create a more transparent and accountable AI ecosystem.”
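The first proposal, a metric that penalizes hallucinations, can be illustrated with a minimal sketch. It assumes a hypothetical scoring rule that awards 1 point for a correct answer, subtracts `wrong_penalty` points for a wrong one, and gives 0 for abstaining. Neither the rule nor the function names come from the study or from the experts quoted above.

```python
def expected_penalized_score(p_correct: float, wrong_penalty: float) -> float:
    """Expected score for answering under an assumed penalized rule.

    Hypothetical rule: +1 for a correct answer, -wrong_penalty for a
    wrong answer. Abstaining scores 0, so answering only pays off
    when this value is positive.
    """
    return p_correct - (1.0 - p_correct) * wrong_penalty


def break_even_confidence(wrong_penalty: float) -> float:
    """Confidence at which answering and abstaining score the same.

    Solves p - (1 - p) * wrong_penalty = 0 for p.
    """
    if wrong_penalty < 0:
        raise ValueError("wrong_penalty must be non-negative")
    return wrong_penalty / (1.0 + wrong_penalty)


# Show how the break-even confidence moves as the penalty grows.
for penalty in (0.0, 1.0, 3.0):
    threshold = break_even_confidence(penalty)
    print(f"penalty={penalty:.1f}  answer only if p_correct > {threshold:.2f}")
```

With a penalty of zero the rule reduces to plain accuracy, where any guess is worth making. A larger penalty raises the confidence a model needs before answering beats abstaining, which is the lever such a metric would use to discourage confident fabrication.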

As the field of AI continues to evolve, hallucinations are likely to remain a major concern. Addressing them will require research into more effective evaluation methods, collaboration among researchers, developers, and regulators, and a commitment to a more robust, transparent, and accountable AI ecosystem. One key open question is how to balance the need for accuracy with the risk of hallucinations, and whether it is possible to develop AI models that are both highly accurate and resistant to hallucinations.