- The Inter-1 AI model achieves a significant milestone in multimodal signal processing, detecting social signals from video, audio, and text.

- Inter-1 opens up new applications, including improving mental health diagnostics and enhancing social media content moderation.

- Traditional AI models have limitations in understanding complex human interactions, making Inter-1 a crucial advancement in AI development.

- Inter-1 integrates and interprets verbal and non-verbal cues, providing a holistic view of human interactions.

- Inter-1’s capabilities pave the way for more comprehensive AI applications in various fields.

Inter-1, a new AI model developed by Interhuman AI, has achieved a significant milestone in multimodal signal processing. The model can accurately detect and interpret social signals from video, audio, and text, a task that has long challenged AI systems. By understanding nuanced human interactions across different media, Inter-1 opens up a range of applications, from improving mental health diagnostics to enhancing social media content moderation.

Advancements in Multimodal AI

Inter-1 arrives as demand for more sophisticated AI solutions is rising. Traditional AI models have mostly focused on a single modality, such as text or images. Real-world human interactions, however, combine verbal and non-verbal cues. Inter-1’s ability to integrate and interpret these signals together addresses a key limitation of existing models and paves the way for more comprehensive AI applications.

Key Features and Capabilities

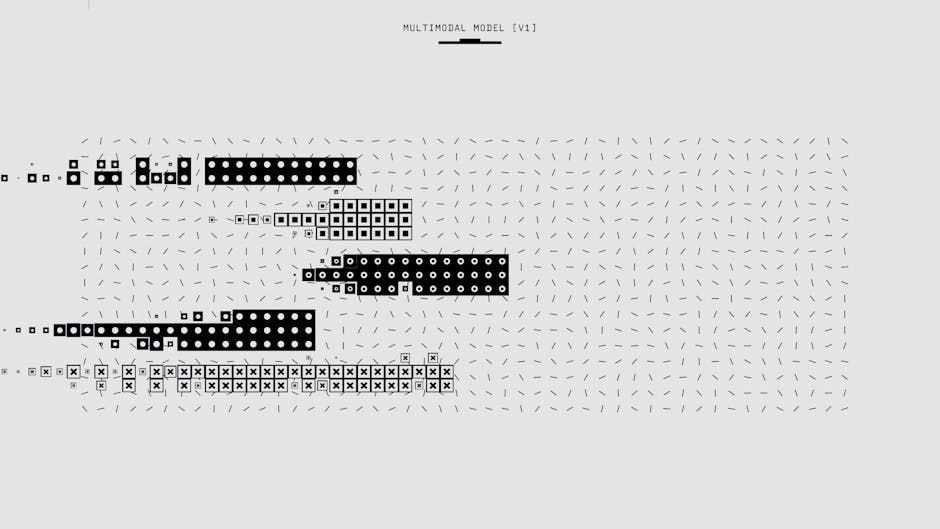

Inter-1 is designed to analyze social signals from multiple sources: video, audio, and text. The model processes facial expressions, tone of voice, and written content together, providing a holistic view of human interactions. Developed by a team of researchers and engineers at Interhuman AI, it can identify subtle emotional and social cues that single-modality AI systems often miss. The model has been trained on a diverse dataset, with the aim of keeping it accurate and reliable across different contexts and demographics.

Technical Breakthroughs and Data Insights

The technical innovations behind Inter-1 include a deep learning architecture that can switch between modalities, allowing the model to adapt to the varying complexity of different media. The researchers at Interhuman AI have also integrated a feedback loop mechanism that lets the model continue to learn and improve its performance. Early tests have shown that Inter-1 can detect social signals with a high degree of accuracy, even in challenging scenarios such as low-quality video or noisy audio. This matters for fields like mental health, where accurately interpreting social cues can lead to better diagnosis and treatment.
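
To make the idea of combining modalities under poor input conditions more concrete, here is a minimal Python sketch of quality-weighted late fusion, a generic technique in multimodal systems. It is not Inter-1's actual architecture, which the article does not describe in detail. The `ModalityReading` structure, the `fuse` function, and all scores and quality values are hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ModalityReading:
    """One modality's estimate for a single social signal (hypothetical structure)."""
    name: str       # e.g. "video", "audio", "text"
    score: float    # estimated probability of the signal, in [0, 1]
    quality: float  # input quality in [0, 1]; 0 means unusable or missing


def fuse(readings: List[ModalityReading]) -> Optional[float]:
    """Quality-weighted average of per-modality scores (late fusion).

    Returns None when no modality has usable input.
    """
    total_quality = sum(r.quality for r in readings)
    if total_quality == 0:
        return None
    return sum(r.score * r.quality for r in readings) / total_quality


# Illustrative values only: a blurry video feed gets a low quality weight,
# so its score contributes less to the fused estimate.
readings = [
    ModalityReading("video", score=0.9, quality=0.2),
    ModalityReading("audio", score=0.4, quality=0.8),
    ModalityReading("text", score=0.5, quality=1.0),
]
fused = fuse(readings)
print(f"fused score: {fused:.2f}")
```

In this sketch, a degraded modality is down-weighted rather than discarded, which is one simple way a system could remain usable with low-quality video or noisy audio. A production model would more likely learn such weights jointly with the rest of the network than take them as fixed inputs.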

Implications for Various Industries

Inter-1’s capabilities have far-reaching implications for multiple industries. In healthcare, the model can be used to improve telemedicine services by providing more accurate emotional assessments. In social media, Inter-1 can enhance content moderation by identifying harmful behavior and providing context-based interventions. The technology also has potential applications in customer service, where it can help businesses better understand and respond to customer needs. As AI continues to evolve, Inter-1 represents a critical step towards more human-like interaction and understanding in AI systems.

Expert Perspectives

Dr. Emily Allen, a leading AI researcher at Stanford University, praised Inter-1 for its innovative approach. “The ability to integrate multiple modalities in real-time is a game-changer,” she said. However, Dr. Allen also cautioned about the ethical implications. “We need to ensure that these models are used responsibly and with transparency to avoid misuse.” Meanwhile, industry experts like John Smith, CEO of a major tech company, see immense potential. “Inter-1 could revolutionize how we interact with AI, making it more intuitive and effective in everyday applications,” he noted.

As Inter-1 continues to be refined and deployed, the key will be to balance its capabilities with ethical considerations. The technology’s potential to enhance human-AI interactions is undeniable, but the industry must remain vigilant to ensure that it is used for the betterment of society. What will be the next breakthrough in multimodal AI, and how will it further transform our digital landscape?