- Meta’s proposed facial recognition feature for smart glasses has sparked fierce opposition from over 70 organizations.

- Critics warn that the feature poses a threat to marginalized groups, including abuse victims, immigrants, and LGBTQ+ people.

- The technology could allow predators to track and exploit vulnerable individuals without consent.

- The controversy highlights the delicate balance between innovation and safety in the tech industry.

- Companies must consider the potential consequences of their products on marginalized communities.

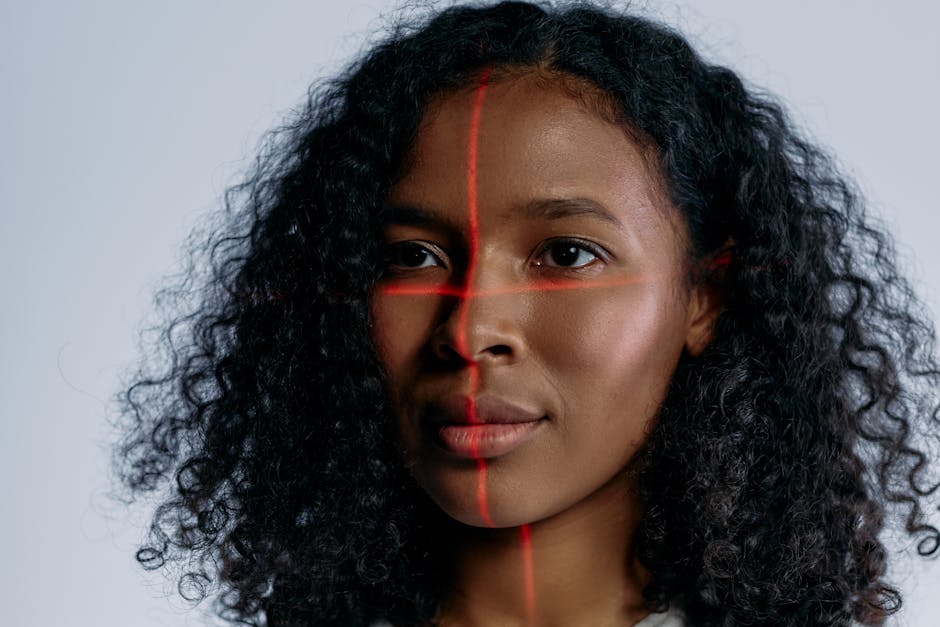

Meta’s proposed facial recognition feature for its smart glasses has met fierce opposition from more than 70 organizations, including the ACLU, EPIC, and Fight for the Future. These groups warn that the feature would endanger abuse victims, immigrants, and LGBTQ+ people, among others, by potentially allowing sexual predators to track and exploit vulnerable individuals. The dispute highlights the delicate balance between innovation and safety in the tech industry, and the need for companies to consider the consequences of their products for marginalized communities.

The Concerns Surrounding Facial Recognition Technology

The concerns surrounding Meta’s facial recognition feature center on privacy and safety: if implemented, the feature could allow people with malicious intentions to identify and track others without their consent. This is particularly worrying for groups already vulnerable to abuse and exploitation, such as domestic violence survivors, immigrants, and LGBTQ+ people. That more than 70 organizations have jointly voiced their concerns underscores the gravity of the situation and the need for Meta to take these concerns seriously.

Key Details of the Controversy

The feature itself is designed to allow users to identify and connect with others using facial recognition technology. Critics argue, however, that it could be used for nefarious purposes such as stalking or harassment. That Meta has not yet implemented robust safeguards against such misuse has only added to their concerns. Furthermore, the company’s history of mishandling user data has led many to question its ability to protect vulnerable populations from the risks associated with this feature.

Analysis of the Potential Consequences

The potential consequences of Meta’s facial recognition feature are troubling. For one, the feature could be used to track and exploit vulnerable individuals, such as abuse victims or LGBTQ+ people, which could lead to increased violence, harassment, and discrimination. It could also be used to surveil marginalized communities, further eroding trust in institutions and exacerbating existing social inequalities. Experts argue that Meta must take a proactive approach to addressing these concerns and implement robust safeguards to prevent misuse of the feature.

Implications for Marginalized Communities

The implications of Meta’s facial recognition feature for marginalized communities are far-reaching and potentially devastating. For abuse victims, the feature could give perpetrators a powerful tool to track and exploit them. For immigrants, it could be used to surveil and monitor their activities, potentially leading to increased deportations and discrimination. For LGBTQ+ people, it could be used to out individuals who are not publicly open about their sexual orientation or gender identity, potentially leading to increased violence and harassment. It is imperative that Meta take these concerns seriously and implement safeguards that protect these vulnerable populations.

Expert Perspectives

Experts are divided on Meta’s facial recognition feature. Some argue that it could improve public safety and help prevent crime, and that these benefits outweigh the risks; others argue that the potential risks to vulnerable populations are too great to justify its implementation. According to one expert, “The use of facial recognition technology is a complex issue that requires careful consideration of the potential risks and benefits. While the technology has the potential to improve public safety, it also poses significant risks to vulnerable populations, particularly if it is not implemented with robust safeguards in place.”

Looking forward, Meta must take a proactive approach to addressing the concerns surrounding its facial recognition feature. This will require the company to engage in open and transparent dialogue with critics and to implement robust safeguards to prevent misuse of the feature. One open question is whether Meta can balance its desire to innovate with its responsibility to protect vulnerable populations. As one expert notes, “The key to resolving this issue will be for Meta to prioritize the safety and well-being of its users, particularly those who are most vulnerable to exploitation and abuse. This will require a fundamental shift in the company’s approach to innovation and a commitment to prioritizing safety and privacy above all else.”